On the ImageNet dataset, our approach achieves $40.8\%$ and $44.8\%$ storage and computation reductions, respectively, with a $0.15\%$ accuracy increase over the baseline ResNet-50 model. Notably, on the CIFAR-10 dataset, our solution brings $0.75\%$ and $0.94\%$ accuracy increases over the baseline ResNet-56 and ResNet-110 models, respectively, while reducing model size and FLOPs by $42.8\%$ and $47.4\%$ (for ResNet-56) and by $48.3\%$ and $52.1\%$ (for ResNet-110). Our evaluation results for different models on various datasets show the superior performance of our approach.

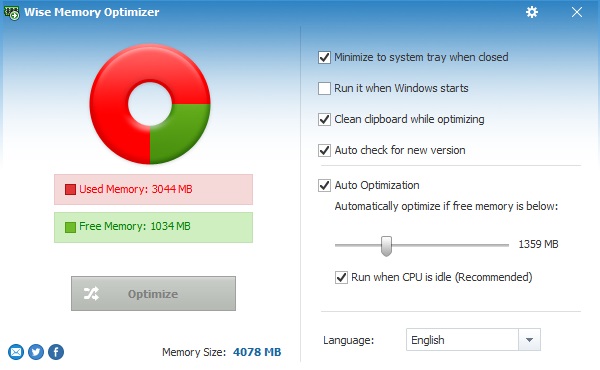

Download Wise Memory Optimizer for Windows to free up physical memory and. Wise Memory Optimizer is a smart system for optimizing RAM memory that defies the amount of this feature currently used by the PC. Some settings let the program work automatically, optimizing RAM use whenever a certain quantity is occupied. A single click is enough to do this within a few seconds. Wise Memory Optimizer keeps improving, and the latest release notes are as follows: adopted a new UI, which solves the interface blur caused by the system scaling setting; fixed the problem that Wise Memory Optimizer could not free up memory on 64-bit Windows systems. This board is a single-chip, PCI Express to four SATA Gen III 6Gb/s channels.

In this paper, starting from an inter-channel perspective, we propose to perform efficient filter pruning using Channel Independence, a metric that measures the correlations among different feature maps. A less independent feature map is interpreted as containing less useful information/knowledge, and hence its corresponding filter can be pruned without affecting model capacity. We systematically investigate the quantification metric, measuring scheme, and sensitivity/reliability of channel independence in the context of filter pruning.
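To make the idea of an inter-channel metric concrete, here is a minimal NumPy sketch. The text above does not give the formula, so this assumes one plausible measure: a channel's independence is the drop in the nuclear norm of the flattened feature-map matrix when that channel's row is zeroed out. The function names `channel_independence` and `filters_to_prune` are hypothetical, and a practical scheme would likely average the scores over many input samples.

```python
import numpy as np


def channel_independence(feature_maps: np.ndarray) -> np.ndarray:
    """Score each channel of one layer's output for a single input.

    feature_maps has shape (C, H, W). Assumption for illustration: the
    score of channel i is the decrease in nuclear norm when row i of the
    flattened (C, H*W) matrix is masked to zero. A channel that is nearly
    a linear combination of the others causes only a small decrease.
    """
    num_channels = feature_maps.shape[0]
    flat = feature_maps.reshape(num_channels, -1)  # one row per feature map
    full_norm = np.linalg.norm(flat, ord="nuc")

    scores = np.empty(num_channels)
    for i in range(num_channels):
        masked = flat.copy()
        masked[i] = 0.0  # remove channel i's contribution
        scores[i] = full_norm - np.linalg.norm(masked, ord="nuc")
    return scores


def filters_to_prune(feature_maps: np.ndarray, num_prune: int) -> np.ndarray:
    """Return indices of the num_prune least independent channels."""
    scores = channel_independence(feature_maps)
    return np.argsort(scores)[:num_prune]


# Toy input: channels 0 and 2 are identical, channel 1 is orthogonal to both.
toy = np.zeros((3, 2, 2))
toy[0, 0, 0] = 1.0
toy[1, 0, 1] = 1.0
toy[2, 0, 0] = 1.0
toy_scores = channel_independence(toy)
toy_pruned = filters_to_prune(toy, num_prune=2)
```

In the toy input, the two duplicated channels receive lower scores than the orthogonal one, so they are the candidates selected for pruning. The per-channel loop recomputes a singular value decomposition each time, which keeps the sketch readable but is not efficient for wide layers.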

Abstract: Filter pruning has been widely used for neural network compression because of the practical acceleration it enables. To date, most existing filter pruning works explore the importance of filters using intra-channel information.
